AI agents are increasingly deployed in high-stakes settings — customer service, healthcare, legal workflows. In these settings, agents engage in multi-turn interactions where decisions unfold over time, and compliance requires satisfying preferences over sequences of actions, not just individual outputs.

Yet compliance today is mostly reactive: agent behavior is audited after deployment, violations flagged after they occur. What regulated industries need is a shift toward proactive compliance — preferences formally specified before deployment, compiled into a verifiable representation, and used to synthesize plans that satisfy them by construction.

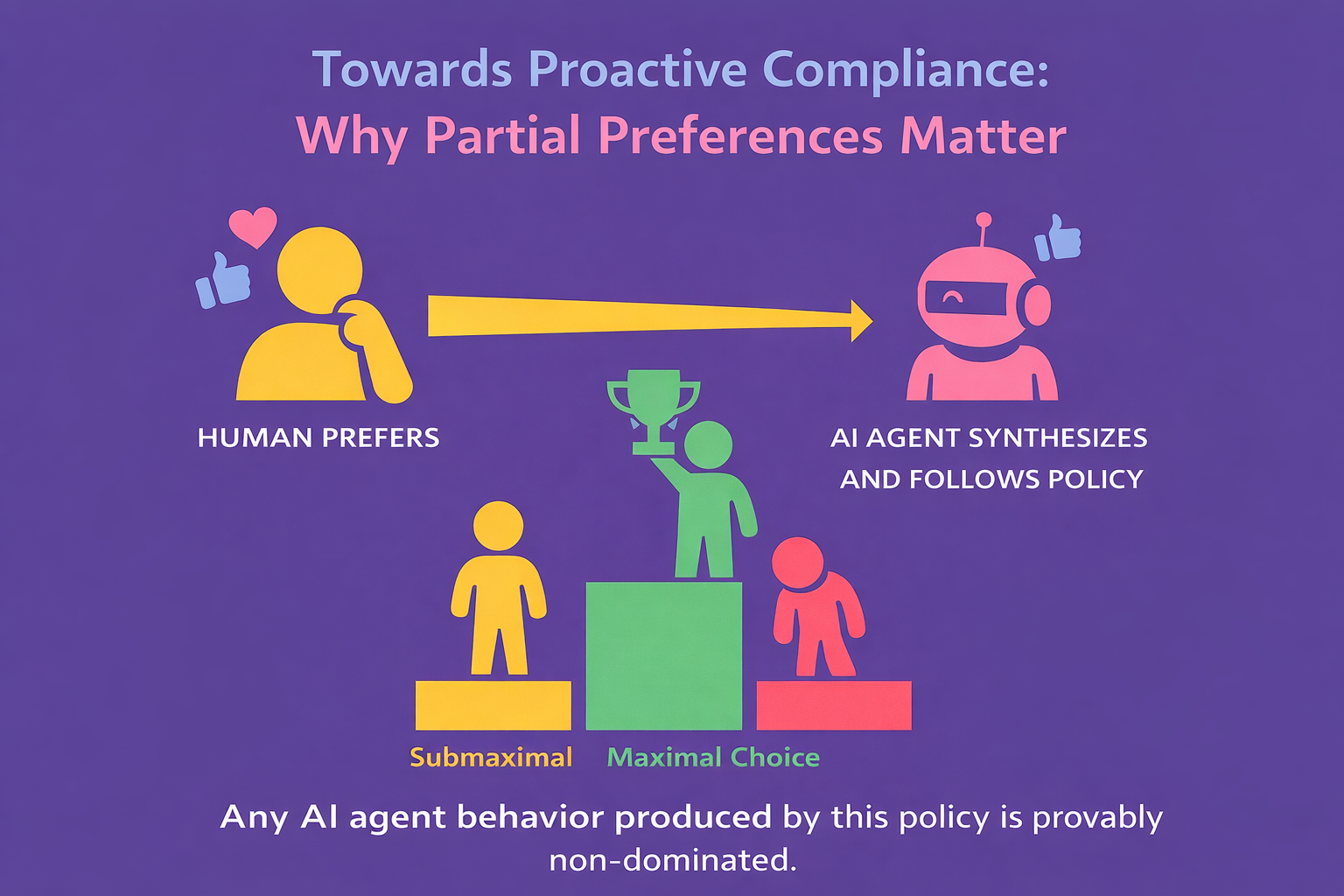

This shift raises a fundamental question. AI is increasingly expected to make decisions in messy, uncertain environments, from delivery robots to digital assistants, yet humans rarely express their preferences in neat, ranked lists. What happens when our priorities are incomplete, ambiguous, or simply incomparable?

A new paper in Automatica by Hazhar Rahmani (Missouri State University), Abhishek N. Kulkarni (Vijil), and Jie Fu (University of Florida) addresses this problem. It introduces a principled framework for synthesizing agent policies that respect preferences over temporal goals, even when those preferences are partial or incompletely known.

As AI systems become more autonomous, the ability to reason under both uncertainty and incomplete human preferences is critical.

This paper shows a mathematically rigorous yet computationally practical way forward. By combining automata theory, stochastic dominance, and multi-objective planning, the authors move us closer to AI systems that not only understand what we want but also act optimally toward satisfying our requirements, by design.

The Problem: Policies Don't Always Rank Everything

Consider a customer service policy: "Resolve every issue within 10 turns. If that's not possible, escalate to a human agent with full documentation."

This implies four possible outcomes, labeled O1 through O4 in the following figure:

[Figure: the four possible outcomes, O1 to O4]

The best (O1) and worst (O4) behaviors are clear. But the middle two are incomparable — different kinds of failure with no common basis for comparing them. This structure — a partial order — appears naturally in several organizational policies.
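
As a minimal sketch, the structure described above can be written down as a set of strict preference pairs. The labels O1 to O4 come from the text; the pair encoding and the function names `transitive_closure` and `compare` are illustrative assumptions, not notation from the paper.

```python
from itertools import product

# Strict preferences stated in the text, as (better, worse) pairs:
# O1 is best, O4 is worst, and O2 / O3 sit in between with no
# stated relation to each other.
STRICTLY_BETTER = {("O1", "O2"), ("O1", "O3"), ("O2", "O4"), ("O3", "O4")}


def transitive_closure(pairs):
    """Add every pair implied by transitivity (a > b and b > c gives a > c)."""
    closure = set(pairs)
    changed = True
    while changed:
        changed = False
        for (a, b), (c, d) in product(list(closure), repeat=2):
            if b == c and (a, d) not in closure:
                closure.add((a, d))
                changed = True
    return closure


ORDER = transitive_closure(STRICTLY_BETTER)


def compare(x, y):
    """Return how outcome x relates to outcome y under the partial order."""
    if x == y:
        return "equal"
    if (x, y) in ORDER:
        return "better"
    if (y, x) in ORDER:
        return "worse"
    return "incomparable"
```

With this encoding, `compare("O1", "O4")` reports that O1 is better, while `compare("O2", "O3")` reports that the two middle outcomes are incomparable, which is exactly the case a total ranking cannot express.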

Handling such cases is harder than it appears. Organizational policies are often incompletely specified and do not always define a clear preference between every pair of agent behaviors. The result is partial preferences, where some behaviors are genuinely incomparable: neither strictly better nor strictly worse than each other.

Partial preferences cannot be faithfully represented by any scalar utility or reward function, because assigning numeric values forces every pair of outcomes to be either ranked or tied, a comparison the policy does not specify. As a result, traditional utility-maximization approaches to decision-making prove insufficient.

Why is this a compelling problem to address?

The practical urgency is hard to overstate. Organizations deploying AI agents in regulated industries (healthcare, financial services, legal, insurance) are under increasing pressure to demonstrate that their systems behave in accordance with documented policies and regulatory frameworks.

This research points to an approach where agent plans are constructed to satisfy policy preferences by design, before deployment, with formal guarantees.

The partial-order framework introduced in this paper is a natural fit for exactly that setting, where policies encode structured and sometimes incomparable temporal objectives that cannot be reduced to a single scalar reward. Existing approaches, by contrast, rely on audits that identify compliance violations only after they have occurred.

The intellectual motivation is equally compelling. Preference-based planning under partial orders was not an ignored problem: it had been studied extensively in deterministic settings. But stochastic environments introduce a fundamental difficulty. An agent policy no longer produces a single outcome; it produces a distribution over outcomes.

Comparing two agent policies therefore means comparing two probability distributions over a partially-ordered space — a problem that sits at the intersection of order theory, automata theory, and probabilistic planning, none of which alone provides the tools to solve it. The fact that this combination had not been tackled in full generality, despite the maturity of each constituent field, made it both a genuine open problem and a technically rich one.
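
To make the comparison concrete, here is a minimal sketch of one standard notion from order theory: a distribution p stochastically dominates q if p puts at least as much probability as q on every upward-closed set of outcomes. This is offered as an illustration under assumptions; the paper's exact ordering may differ, and the outcome labels, probabilities, and function names below are hypothetical.

```python
from itertools import chain, combinations

OUTCOMES = ["O1", "O2", "O3", "O4"]

# (better, worse) pairs, already transitively closed.
# O2 and O3 are deliberately left unrelated (incomparable).
BETTER = {("O1", "O2"), ("O1", "O3"), ("O1", "O4"), ("O2", "O4"), ("O3", "O4")}


def is_up_set(subset):
    """A set is upward closed if it contains everything better than its members."""
    return all(x in subset for (x, y) in BETTER if y in subset)


def up_sets():
    """Enumerate all upward-closed subsets of OUTCOMES (fine for tiny examples)."""
    all_subsets = chain.from_iterable(
        combinations(OUTCOMES, r) for r in range(len(OUTCOMES) + 1)
    )
    return [set(s) for s in all_subsets if is_up_set(set(s))]


def dominates(p, q, tol=1e-12):
    """True if p assigns at least as much mass as q to every upward-closed set."""
    return all(
        sum(p[o] for o in u) >= sum(q[o] for o in u) - tol for u in up_sets()
    )


# Hypothetical outcome distributions induced by four candidate policies.
p = {"O1": 0.5, "O2": 0.3, "O3": 0.1, "O4": 0.1}
q = {"O1": 0.4, "O2": 0.3, "O3": 0.1, "O4": 0.2}
r = {"O1": 0.4, "O2": 0.5, "O3": 0.0, "O4": 0.1}
s = {"O1": 0.4, "O2": 0.0, "O3": 0.5, "O4": 0.1}
```

Here `dominates(p, q)` holds because p shifts mass from the worst outcome to the best one. Neither `dominates(r, s)` nor `dominates(s, r)` holds, because r and s trade mass between the two incomparable outcomes, so the two policies themselves are incomparable.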

How does the research address this challenge?

The paper makes two contributions that work as a pipeline: the first builds a computational representation of the preference specification; the second uses that representation to synthesize a maximal policy.

Concretely, the paper:

- introduces a formal specification framework based on linear temporal logic over finite traces (LTLf), a structured, English-like formal language, for expressing preferences as partial orders over temporal goals,

- gives an algorithm that converts these specifications into a computational model, a preference deterministic finite automaton (PDFA), and

- uses this model together with a Markov decision process (MDP) to synthesize agent policies that are compliant by design (i.e., Pareto-optimal) with respect to the given partial order.
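
The idea of an automaton that carries a preference relation can be illustrated with a toy sketch. It is not the paper's construction: it assumes two hypothetical "eventually" goals, `resolved` and `documented`, tracks which of them a finite trace has achieved, and orders automaton states by strict set inclusion. All names here are assumptions made for illustration.

```python
from typing import FrozenSet, Iterable, Set

# Hypothetical atomic propositions; each goal is "eventually <proposition>".
GOALS = ("resolved", "documented")

State = FrozenSet[str]
INITIAL: State = frozenset()


def step(state: State, label: Set[str]) -> State:
    """Deterministic transition: remember every goal proposition seen so far."""
    return state | (frozenset(label) & frozenset(GOALS))


def run(trace: Iterable[Set[str]]) -> State:
    """Feed a finite trace (a sequence of label sets) through the automaton."""
    state = INITIAL
    for label in trace:
        state = step(state, label)
    return state


def prefers(s1: State, s2: State) -> bool:
    """Strict preference on states: s1 has achieved strictly more goals than s2."""
    return s2 < s1  # proper-subset test on frozensets


def compare_traces(t1, t2) -> str:
    """Compare two traces via the states they reach."""
    s1, s2 = run(t1), run(t2)
    if s1 == s2:
        return "equivalent"
    if prefers(s1, s2):
        return "better"
    if prefers(s2, s1):
        return "worse"
    return "incomparable"
```

A trace that reaches both goals is preferred to one that reaches only `resolved`, while a trace reaching only `resolved` and one reaching only `documented` end in incomparable states. The design point is that the preference lives on automaton states, so comparing behaviors reduces to comparing where their traces end.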

The paper introduces a framework that goes from a preference specification to a provably maximal policy:

> Specify preferences → compile to automaton (computational model) → reduce to multi-objective planning in MDP → synthesize policy
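
The last two stages of the pipeline can be loosely illustrated with a Pareto filter. The sketch below assumes each candidate policy has already been summarized by a vector of objective values (for example, probabilities of reaching certain sets of automaton states) and keeps only the policies that no other candidate dominates. The paper synthesizes policies directly in the MDP; this filter over a finite, hypothetical candidate list only shows what "Pareto-optimal" means. The policy names and numbers are made up.

```python
from typing import Dict, List, Tuple

Vector = Tuple[float, ...]


def vector_dominates(v: Vector, w: Vector) -> bool:
    """v dominates w if it is at least as good everywhere and differs somewhere."""
    return all(a >= b for a, b in zip(v, w)) and v != w


def pareto_front(candidates: Dict[str, Vector]) -> List[str]:
    """Return the names of candidates not dominated by any other candidate."""
    return [
        name
        for name, vec in candidates.items()
        if not any(
            vector_dominates(other, vec)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical objective vectors for three candidate policies.
CANDIDATES = {
    "pi_1": (0.5, 0.8, 0.6),
    "pi_2": (0.4, 0.7, 0.5),
    "pi_3": (0.4, 0.9, 0.4),
}
```

In this example `pi_2` is dropped because `pi_1` is better on every objective, while `pi_1` and `pi_3` both survive: each beats the other on at least one objective, so neither can be called better overall.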

What are the implications, and where does the research go next?

For practitioners deploying AI agents today, the most immediate implication is a shift in how compliance can be framed. Rather than auditing agent behavior after a failure, organizations can use this framework to address compliance at design time. For those operating in regulated industries, where demonstrating adherence to documented policies is not optional, this research represents a meaningful step toward verifiable compliance by construction, rather than relying solely on empirical evaluation.

For researchers, the paper opens at least two compelling directions.

The first is online planning for dynamic environments. Real-world agents operate in settings where preferences shift and environments change mid-deployment. Extending the framework to handle evolving preferences without recompiling from scratch is both technically challenging and practically important.

The second, and perhaps the more consequential, is the design of a conversational interface that elicits preferences in natural language and automatically translates them into a formal preference model. This would close the loop between human intent and machine-verifiable behavior, allowing non-expert stakeholders to specify what they want from an agent in plain language and receive a policy with formal compliance guarantees. The grounding problem this raises, reliably mapping natural language to temporal logic specifications, is one of the active frontiers in neurosymbolic AI.

Both directions point toward the same long-term vision: AI agents whose behavior is not just evaluated against policies, but shaped by them from the ground up — making trustworthy deployment less a matter of hope, and more a matter of engineering.
